In this blog, we explain what Delta Lake is, how it works, its main features, real business benefits, and common use cases across industries.

As companies collect more data, they often face the same problem. Data pipelines break. Reports do not match. Data quality drops over time. Teams lose trust in dashboards and analytics. Many organisations build data lakes to store large volumes of data, but without proper controls, these lakes become messy and unreliable.

This is where Delta Lake plays an important role. Delta Lake helps organisations keep data clean, consistent, and reliable while still using low-cost cloud storage. It is a key part of modern data platforms and lakehouse architectures.

What Is Delta Lake?

Delta Lake is an open storage format that brings reliability and structure to data lakes. It is designed to work on top of cloud object storage such as Azure Data Lake Storage, Amazon S3, or Google Cloud Storage.

Delta Lake adds features that traditional data lakes do not have, such as:

Data consistency

Schema enforcement

Transaction support

Version control

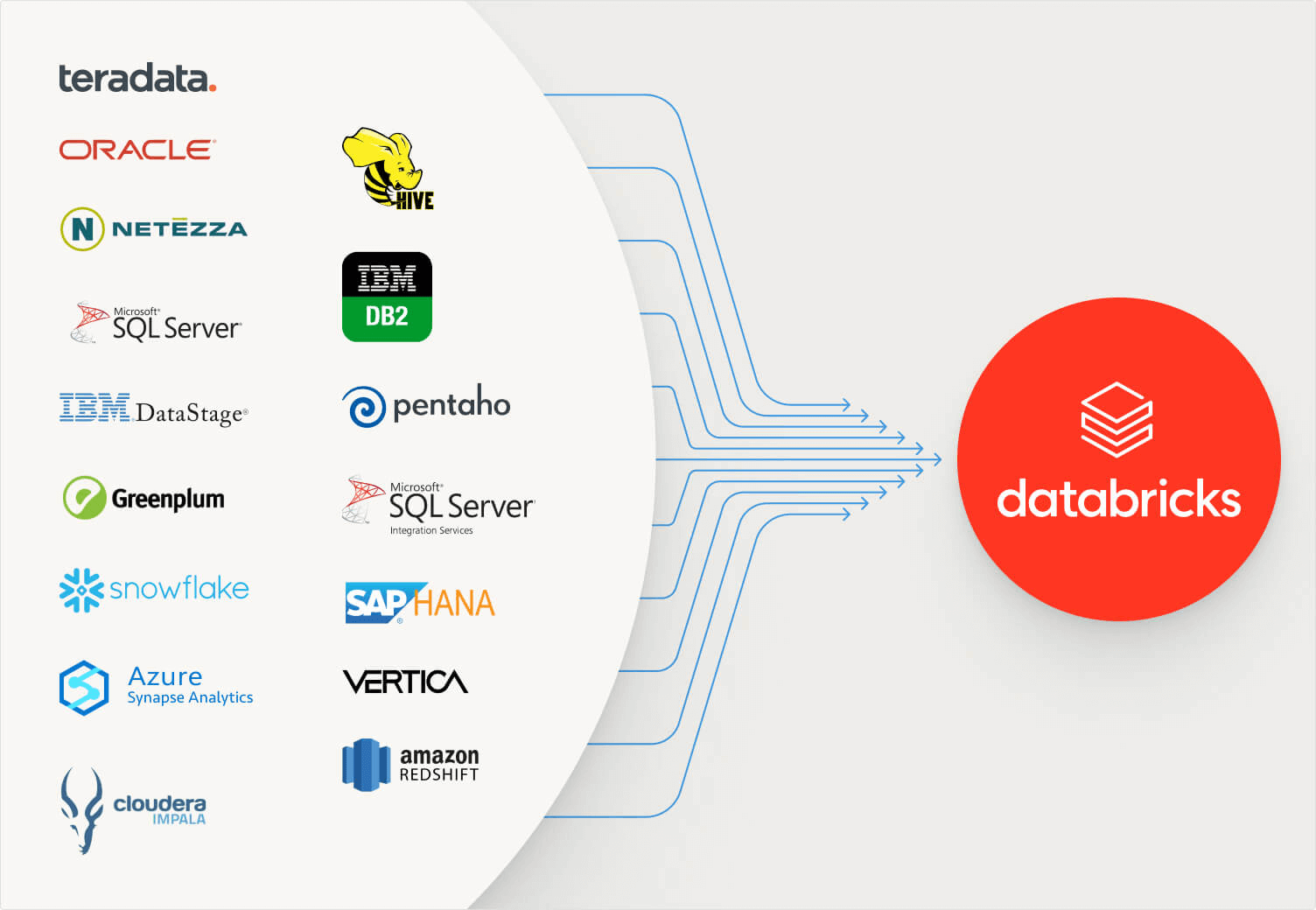

It is widely used with platforms like Databricks, but it also works with open source Apache Spark and other engines that support Delta tables.

Why Traditional Data Lakes Struggle

Before understanding Delta Lake, it helps to see why many data lakes fail.

Common Data Lake Problems

Traditional data lakes often suffer from:

No transaction support

Corrupt or partial writes

Broken pipelines

Inconsistent schemas

Difficult data recovery

When multiple pipelines write to the same data, errors can happen. When schemas change, dashboards break. Over time, teams stop trusting the data.

These problems slow down analytics and block AI projects.

How Delta Lake Works

Delta Lake solves these issues by adding a transaction log on top of cloud storage.

Every change to the data is recorded in a log. This log tracks:

What data was added

What data was changed

What data was deleted

Because of this log, Delta Lake can manage data updates safely and consistently.
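The idea can be sketched as a toy model in plain Python. This is not Delta Lake's API or its on-disk format; the `add` and `remove` action names and the file names are illustrative assumptions. Each commit lists the data files it adds or removes, and the current table is whatever remains after replaying the commits in order.

```python
# Toy model of a transaction log: each commit lists files added or removed.
# File names and the action layout are hypothetical, for illustration only.

def replay(log):
    """Return the set of data files that make up the table after all commits."""
    live_files = set()
    for commit in log:
        for action, file_name in commit:
            if action == "add":
                live_files.add(file_name)
            elif action == "remove":
                live_files.discard(file_name)
            else:
                raise ValueError(f"unknown action: {action}")
    return live_files


log = [
    [("add", "part-000.parquet")],    # version 0: data added
    [("add", "part-001.parquet")],    # version 1: more data added
    [("remove", "part-000.parquet"),  # version 2: data changed by swapping
     ("add", "part-002.parquet")],    #            an old file for a rewritten one
    [("remove", "part-001.parquet")], # version 3: data deleted
]

current = replay(log)
print(sorted(current))  # ['part-002.parquet']
```

In this sketch a change is modelled as removing the old file and adding a rewritten one, so readers only ever see complete files that a commit has published.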

Core Features of Delta Lake

Delta Lake includes several powerful features that improve data reliability and performance.

ACID Transactions

One of the most important features of Delta Lake is ACID transactions.

This means:

Data writes are all or nothing

Partial or failed writes do not corrupt data

Multiple jobs can safely write at the same time, because conflicting commits are detected instead of silently overwriting each other

This is critical for production data pipelines where reliability matters.
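One common way to get all-or-nothing behaviour on top of plain files is to publish each commit by creating the next numbered log file exclusively, so that only one writer can claim a given version. The sketch below illustrates that idea with the local file system; the `try_commit` helper, the directory, and the file contents are hypothetical, and Delta Lake's real commit protocol involves more (conflict checks and storage-specific handling).

```python
import json
import os
import tempfile


def try_commit(log_dir, version, actions):
    """Attempt to publish `actions` as commit `version`; return True on success."""
    path = os.path.join(log_dir, f"{version:020d}.json")
    try:
        # Mode "x" fails if the file already exists, so only one writer
        # can claim a given version number.
        with open(path, "x") as f:
            json.dump(actions, f)
        return True
    except FileExistsError:
        return False


log_dir = tempfile.mkdtemp()

first = try_commit(log_dir, 0, [{"add": "part-000.parquet"}])
second = try_commit(log_dir, 0, [{"add": "part-999.parquet"}])  # competing writer loses
retry = try_commit(log_dir, 1, [{"add": "part-999.parquet"}])   # and retries as the next version

print(first, second, retry)  # True False True
```

The assumption here is that data files are written before the commit is attempted, so a job that fails halfway leaves behind files that no commit references and no reader sees.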

Schema Enforcement

Delta Lake checks incoming data against the expected schema.

If data does not match, Delta Lake will:

Reject the write instead of storing bad data

Raise an error describing the schema mismatch, which teams can use for alerting

This prevents silent data quality problems and broken reports.
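As a rough illustration of the principle, here is a plain-Python sketch that validates a whole batch before any row is stored. The schema, the `SchemaMismatch` exception, and the `append` helper are hypothetical and are not part of Delta Lake; the real checks happen inside the engine at write time.

```python
# Hypothetical table schema, for illustration only.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "country": str}


class SchemaMismatch(Exception):
    """Raised when a batch does not match the expected schema."""


def validate_batch(rows, schema):
    """Raise SchemaMismatch if any row has missing, extra, or wrongly typed columns."""
    for i, row in enumerate(rows):
        missing = schema.keys() - row.keys()
        extra = row.keys() - schema.keys()
        if missing or extra:
            raise SchemaMismatch(f"row {i}: missing={sorted(missing)} extra={sorted(extra)}")
        for column, expected in schema.items():
            if not isinstance(row[column], expected):
                raise SchemaMismatch(
                    f"row {i}: column {column!r} expected {expected.__name__}, "
                    f"got {type(row[column]).__name__}"
                )


def append(table, rows, schema):
    """Validate the whole batch first, so one bad row rejects the entire write."""
    validate_batch(rows, schema)
    table.extend(rows)


table = []
append(table, [
    {"order_id": 1, "amount": 19.99, "country": "GB"},
    {"order_id": 2, "amount": 5.00, "country": "US"},
], EXPECTED_SCHEMA)

bad_batch = [
    {"order_id": 3, "amount": 7.25, "country": "DE"},
    {"order_id": 4, "amount": "12.50", "country": "FR"},  # amount arrives as text
]
try:
    append(table, bad_batch, EXPECTED_SCHEMA)
except SchemaMismatch as error:
    print("rejected:", error)

print(len(table))  # 2, because the bad batch was rejected as a whole
```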

Schema Evolution

When business needs change, data schemas change too. Delta Lake allows controlled schema updates.

Teams can:

Add new columns safely

Manage schema changes without breaking pipelines

This makes platforms more flexible over time.
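A minimal sketch of controlled evolution, again in plain Python rather than Delta Lake's own API: new columns are allowed, changing the type of an existing column is refused, and rows written before the change read back with an empty value for the new column. The `evolve_schema` and `read_row` helpers and the column names are assumptions made for this example.

```python
def evolve_schema(schema, incoming):
    """Return a new schema that adds unseen columns; refuse to change existing types."""
    merged = dict(schema)
    for column, column_type in incoming.items():
        if column in merged and merged[column] is not column_type:
            raise TypeError(
                f"column {column!r} is {merged[column].__name__}, "
                f"cannot change to {column_type.__name__}"
            )
        merged.setdefault(column, column_type)
    return merged


def read_row(row, schema):
    """Project a stored row onto the current schema, filling newer columns with None."""
    return {column: row.get(column) for column in schema}


schema = {"order_id": int, "amount": float}
old_row = {"order_id": 1, "amount": 9.5}  # written before the schema changed

# A new source starts sending a "channel" column.
schema_v2 = evolve_schema(schema, {"order_id": int, "amount": float, "channel": str})
print(list(schema_v2))             # ['order_id', 'amount', 'channel']
print(read_row(old_row, schema_v2))  # {'order_id': 1, 'amount': 9.5, 'channel': None}

try:
    evolve_schema(schema_v2, {"amount": str})  # incompatible type change
except TypeError as error:
    print("refused:", error)
```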

Time Travel and Versioning

Delta Lake keeps a history of table versions, subject to the retention settings configured for the table.

With time travel, teams can:

Query old versions of data

Recover from mistakes

Debug pipeline issues

Reproduce reports

This feature is extremely valuable for audits and investigations.
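Conceptually, querying an old version means replaying the log only up to that version, and recovering from a mistake means writing a new commit that brings the table back to an earlier state. The sketch below models both in plain Python; the `snapshot` and `restore` helpers and the file names are hypothetical, and it assumes the older data files have not yet been cleaned up.

```python
def snapshot(log, version=None):
    """Replay commits 0..version (inclusive); the default is the latest version."""
    if version is None:
        version = len(log) - 1
    if not 0 <= version < len(log):
        raise ValueError(f"version {version} is not in the log")
    files = set()
    for commit in log[: version + 1]:
        files -= set(commit.get("remove", []))
        files |= set(commit.get("add", []))
    return files


def restore(log, version):
    """Append a new commit that returns the table to the state at `version`."""
    target = snapshot(log, version)
    current = snapshot(log)
    log.append({"remove": sorted(current - target), "add": sorted(target - current)})
    return len(log) - 1


log = [
    {"add": ["sales-a.parquet"]},                       # version 0
    {"add": ["sales-b.parquet"]},                       # version 1
    {"remove": ["sales-a.parquet", "sales-b.parquet"],  # version 2: a faulty job
     "add": ["sales-bad.parquet"]},                     #            overwrote the table
]

print(sorted(snapshot(log)))     # ['sales-bad.parquet']
print(sorted(snapshot(log, 1)))  # ['sales-a.parquet', 'sales-b.parquet']

new_version = restore(log, 1)
print(new_version, sorted(snapshot(log)))  # 3 ['sales-a.parquet', 'sales-b.parquet']
```

Because the restore is itself a new commit, the faulty version 2 stays in the history and can still be inspected when debugging.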

Upserts and Deletes

Traditional data lakes are good at appending data but struggle with updates.

Delta Lake supports:

Updates

Deletes

Merge operations

This makes it easier to handle use cases like change data capture and slowly changing dimensions.
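The semantics of a merge (often called an upsert) can be shown with a small plain-Python sketch: rows that match on a key are updated, and rows that do not match are inserted. The `merge` function and the sample rows are illustrative assumptions, not Delta Lake's merge API.

```python
def merge(target, source, key):
    """Upsert `source` rows into `target` by `key`: replace matches, insert the rest."""
    by_key = {row[key]: dict(row) for row in target}
    for row in source:
        by_key[row[key]] = dict(row)  # matched keys are overwritten, new keys are added
    return list(by_key.values())


customers = [
    {"id": 1, "name": "Ada", "city": "London"},
    {"id": 2, "name": "Grace", "city": "New York"},
]
changes = [
    {"id": 2, "name": "Grace", "city": "Boston"},  # existing customer moved
    {"id": 3, "name": "Alan", "city": "Manchester"},  # new customer
]

merged = merge(customers, changes, key="id")
for row in merged:
    print(row)
```

After the merge, customer 1 is untouched, customer 2 shows the new city, and customer 3 has been added, all in a single operation.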

Performance Optimisation

Delta Lake stores data as Parquet files and supports optimisations such as:

Data compaction

Partition pruning

Data skipping based on file-level statistics

These features improve query speed and reduce cost.
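To show why this reduces work, here is a simplified sketch of how a query engine can decide which files to read. It assumes each file records its partition value and the minimum and maximum of one column; the file list, field names, and `files_to_scan` helper are hypothetical.

```python
# Hypothetical file listing: one partition column (date) plus min/max statistics.
FILES = [
    {"path": "date=2024-01-01/part-0.parquet", "date": "2024-01-01", "min_amount": 5, "max_amount": 40},
    {"path": "date=2024-01-01/part-1.parquet", "date": "2024-01-01", "min_amount": 45, "max_amount": 900},
    {"path": "date=2024-01-02/part-0.parquet", "date": "2024-01-02", "min_amount": 10, "max_amount": 700},
]


def files_to_scan(files, date, min_amount):
    """Keep only files that could contain rows for `date` with amount >= `min_amount`."""
    selected = []
    for f in files:
        if f["date"] != date:
            continue  # partition pruning: wrong partition, skip without opening the file
        if f["max_amount"] < min_amount:
            continue  # statistics: no row in this file can satisfy the filter
        selected.append(f["path"])
    return selected


# Query: rows on 2024-01-01 with amount >= 500
print(files_to_scan(FILES, "2024-01-01", 500))  # ['date=2024-01-01/part-1.parquet']
```

Only one of the three files needs to be read for this query, which is where the speed and cost savings come from.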

Benefits of Using Delta Lake

Delta Lake delivers clear benefits to both technical and business teams.

Improved Data Reliability

With transaction support and schema checks, data becomes more trustworthy. Teams spend less time chasing errors and fixing broken pipelines.

Reliable data builds confidence across the organisation.

Lower Operational Risk

Time travel and versioning reduce risk. If something goes wrong, data can be restored easily. This lowers stress during deployments and updates.

Faster Analytics

Optimised storage and query execution lead to faster dashboards and reports. Business users get answers quicker.

Better Support for AI and Machine Learning

AI models need clean and consistent data. Delta Lake helps keep training data reliable and versioned over time.

This improves model accuracy and repeatability.

Reduced Cost

Delta Lake uses low-cost cloud storage while providing warehouse-like features. This reduces the need for expensive duplicate systems.

Delta Lake in the Lakehouse Architecture

Delta Lake is a core component of the lakehouse architecture.

In a lakehouse:

Raw data is stored in cloud storage

Delta Lake adds reliability and structure

Analytics and AI run on the same data

This removes the need to move data between separate lakes and warehouses.

Common Delta Lake Use Cases

Delta Lake is used across many industries and scenarios.

ETL and ELT Pipelines

Delta Lake is ideal for building data pipelines.

Teams use it to:

Ingest raw data

Clean and standardise data

Apply business rules

Serve analytics and reports

Its reliability makes pipelines easier to manage.
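The four steps above can be sketched as a tiny pipeline in plain Python. Everything here is a simplified assumption for illustration: the sample records, the function names, and the business rule are hypothetical, and in a real pipeline each stage would read from and write to its own Delta table.

```python
# Ingest: raw records arrive as text, with inconsistent casing and a duplicate.
raw = [
    {"order_id": "1", "country": " gb ", "amount": "120"},
    {"order_id": "2", "country": "US", "amount": "not-a-number"},
    {"order_id": "3", "country": "us", "amount": "80"},
    {"order_id": "1", "country": " gb ", "amount": "120"},  # duplicate delivery
]


def clean(records):
    """Standardise types and casing; drop unparseable rows and duplicate order ids.

    A production pipeline would usually quarantine rejected rows rather than drop them.
    """
    seen, cleaned = set(), []
    for record in records:
        try:
            row = {
                "order_id": int(record["order_id"]),
                "country": record["country"].strip().upper(),
                "amount": int(record["amount"]),
            }
        except (KeyError, ValueError):
            continue
        if row["order_id"] not in seen:
            seen.add(row["order_id"])
            cleaned.append(row)
    return cleaned


def apply_rules(rows, min_amount=100):
    """Hypothetical business rule: flag orders at or above `min_amount` as high value."""
    return [{**row, "high_value": row["amount"] >= min_amount} for row in rows]


def serve(rows):
    """Aggregate revenue per country for reporting."""
    totals = {}
    for row in rows:
        totals[row["country"]] = totals.get(row["country"], 0) + row["amount"]
    return totals


report = serve(apply_rules(clean(raw)))
print(report)  # {'GB': 120, 'US': 80}
```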

Streaming Data Processing

Delta Lake supports streaming data.

Common use cases include:

IoT data ingestion

Sensor data processing

Event data pipelines

Streaming and batch data can be handled in one system.

Change Data Capture

Delta Lake supports merge operations, which makes it suitable for CDC.

It can handle:

Updates from source systems

Deletes and corrections

Slowly changing data

This is important for operational reporting.
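A change feed often contains several events for the same record, so a typical pattern is to keep only the latest event per key and then apply it as an upsert or a delete. The sketch below shows that logic in plain Python; the event layout (`op`, `id`, `seq`, `data`) and the `apply_changes` helper are assumptions for this example, not a format defined by Delta Lake.

```python
def apply_changes(table, events):
    """Apply change events to `table` (a dict keyed by id), using the latest event per key."""
    latest = {}
    for event in events:
        current = latest.get(event["id"])
        if current is None or event["seq"] > current["seq"]:
            latest[event["id"]] = event

    result = dict(table)
    for key, event in latest.items():
        if event["op"] == "delete":
            result.pop(key, None)
        else:  # "insert" and "update" are both treated as upserts
            result[key] = event["data"]
    return result


accounts = {
    1: {"status": "active"},
    2: {"status": "active"},
}
events = [
    {"op": "update", "id": 1, "seq": 10, "data": {"status": "suspended"}},
    {"op": "update", "id": 1, "seq": 12, "data": {"status": "closed"}},  # later correction wins
    {"op": "delete", "id": 2, "seq": 11, "data": None},
    {"op": "insert", "id": 3, "seq": 13, "data": {"status": "active"}},
]

print(apply_changes(accounts, events))
# {1: {'status': 'closed'}, 3: {'status': 'active'}}
```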

Business Intelligence and Dashboards

Delta Lake provides fast and reliable data for BI tools.

Dashboards built on Delta Lake:

Refresh faster

Break less often

Show consistent numbers

This improves trust in analytics.

Machine Learning Training Data

Delta Lake is often used to store training datasets.

Benefits include:

Clean and versioned data

Reproducible experiments

Easier model debugging

This supports strong MLOps practices.

Industry Examples of Delta Lake

Energy

Energy companies use Delta Lake to manage:

Sensor and IoT data

Asset performance metrics

Forecasting inputs

Reliable pipelines support real-time monitoring and predictive maintenance.

Healthcare

Healthcare organisations use Delta Lake to manage:

Clinical data

Operational metrics

Compliance reporting

Time travel helps with audits and investigations.

Retail and eCommerce

Retailers use Delta Lake to:

Track transactions

Analyse customer behaviour

Power recommendation systems

Reliable data improves personalisation.

Finance

Financial firms use Delta Lake for:

Risk reporting

Transaction analysis

Regulatory compliance

Strong consistency and auditability are critical.

Best Practices for Using Delta Lake

To get the most value from Delta Lake, teams should follow best practices.

Use clear data layers such as Bronze, Silver, and Gold

Apply schema enforcement early

Monitor data quality continuously

Avoid large unpartitioned tables

Use merge operations carefully

Retain history based on business needs

Good design improves performance and stability.

Common Mistakes to Avoid

Some common mistakes include:

Treating Delta Lake like simple file storage

Ignoring schema changes

Running full refreshes unnecessarily

Skipping monitoring and alerts

Avoiding these issues improves long-term success.

Why Delta Lake Matters for Modern Data Platforms

Delta Lake bridges the gap between flexibility and reliability. It allows teams to keep data in low-cost storage while adding the controls needed for production workloads.

For organisations building analytics and AI platforms, Delta Lake is often a foundational technology.

Conclusion: How Tenplus Helps You Succeed With Delta Lake

Delta Lake provides powerful features, but success depends on how it is implemented. Poor design can still lead to slow performance and data quality issues.

Tenplus helps organisations:

Design Delta Lake-based architectures

Build reliable ETL and streaming pipelines

Apply Medallion architecture correctly

Implement governance and monitoring

Optimise performance and cost

Prepare data platforms for AI

Tenplus also offers a free 15-day proof of concept so teams can see Delta Lake working with their own data before making a larger commitment.

If your organisation wants to build a reliable, scalable, and AI-ready data platform using Delta Lake, Tenplus makes the journey simpler and safer.

FAQs

1. What is Delta Lake used for?

Delta Lake is used to make data lakes more reliable. It helps teams store data safely, manage updates, prevent broken pipelines, and keep analytics and reports consistent.

2. How is Delta Lake different from a traditional data lake?

A traditional data lake only stores files. Delta Lake adds transaction support, schema checks, version history, and better performance, which makes data easier to trust and manage.

3. Is Delta Lake only used with Databricks?

Delta Lake is commonly used with Databricks, but it is an open format and can work with open source Apache Spark and other engines that support Delta tables.