
Modern companies collect data from many systems. This data comes from applications, sensors, databases, APIs, and sometimes even spreadsheets. To make this data useful for analytics and AI, it must be processed and transformed. This is where ETL and ELT play an important role.

ETL and ELT are two common ways to move and prepare data. The choice between them can affect cost, speed, data quality, and how well a company can adopt machine learning or AI. Databricks supports both ETL and ELT, but it does so in a modern way that takes advantage of cloud computing and the lakehouse architecture.

In this blog, we explain ETL vs ELT in simple terms, highlight their differences, and show how Databricks fits into both patterns.

What Is ETL

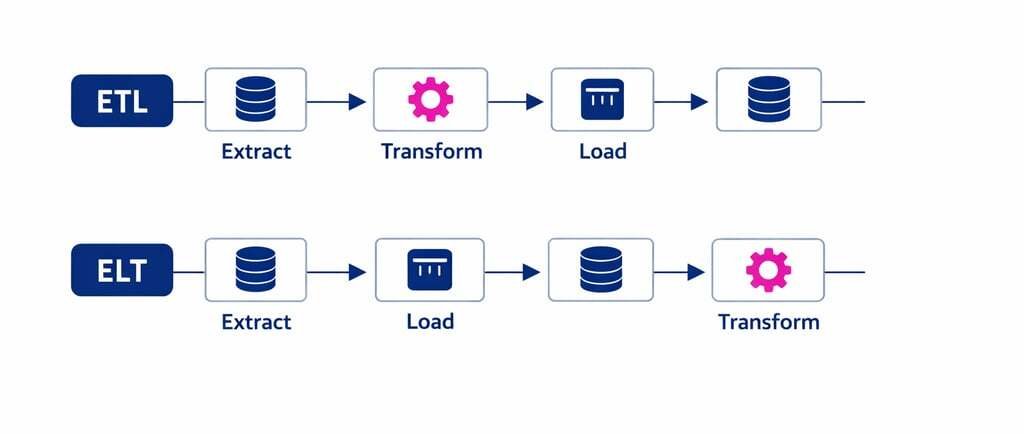

ETL stands for Extract, Transform, Load.

The steps are:

Extract data from source systems

Transform the data into a usable format

Load the data into the target system

ETL was the main pattern in traditional data warehouse systems. Transformations happened before loading to ensure the warehouse stored clean and structured data.

This made sense when compute power was limited and storage was expensive. Companies needed strong control before data entered the warehouse.
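The three ETL steps can be sketched in a few lines of Python. This is a minimal illustration, not a Databricks pipeline. It uses Python's built-in sqlite3 module as a stand-in for the warehouse, and the orders table, its columns, and the sample rows are hypothetical. The row with an invalid amount is dropped during the transform step, so it never reaches the warehouse.

```python
import sqlite3

# Hypothetical source rows, as they might arrive from an application export.
SOURCE_ROWS = [
    {"order_id": "1", "amount": "19.99", "country": " us "},
    {"order_id": "2", "amount": "n/a", "country": "DE"},
    {"order_id": "3", "amount": "5.00", "country": "de"},
]

def extract():
    """Extract: read rows from the source system (here, an in-memory list)."""
    return list(SOURCE_ROWS)

def transform(rows):
    """Transform outside the warehouse: cast types, normalise values, drop bad rows."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # rows that fail validation never reach the warehouse
        clean.append((int(row["order_id"]), amount, row["country"].strip().upper()))
    return clean

def load(conn, rows):
    """Load: write only the curated rows into the target table."""
    conn.execute("CREATE TABLE orders (order_id INTEGER, amount REAL, country TEXT)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)

conn = sqlite3.connect(":memory:")
load(conn, transform(extract()))
print(conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
```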

What Is ELT

ELT stands for Extract, Load, Transform.

The steps are:

Extract data from source systems

Load the data into the target system

Transform data inside the target system

ELT became popular with cloud data platforms because compute and storage became cheap and elastic. Instead of transforming data first, the platform can store raw data and transform it later.

Because raw data stays available, ELT gives teams more flexibility and supports analytics, machine learning, and iterative workloads.
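For contrast, here is similar hypothetical data handled ELT-style, again with sqlite3 standing in for the target platform. The raw rows are loaded untouched, and the cleaning happens afterwards with SQL inside the target. The table names and the simple digit-prefix check used to reject bad amounts are assumptions made for the example.

```python
import sqlite3

# Hypothetical raw rows, loaded exactly as extracted (all values still text).
RAW_ROWS = [
    ("1", "19.99", " us "),
    ("2", "n/a", "DE"),
    ("3", "5.00", "de"),
]

conn = sqlite3.connect(":memory:")

# Load: land the raw data untouched in the target system.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ROWS)

# Transform: run SQL inside the target system to build a curated table.
# The GLOB check is a deliberately simple validity test for this sketch.
conn.execute("""
    CREATE TABLE orders AS
    SELECT CAST(order_id AS INTEGER) AS order_id,
           CAST(amount AS REAL)      AS amount,
           UPPER(TRIM(country))      AS country
    FROM raw_orders
    WHERE amount GLOB '[0-9]*'
""")

print(conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
# The raw table is still there for other transformations later.
print(conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0])
```

Unlike an ETL flow, the raw_orders table is kept, so a different transformation can be run on it later without extracting the data again.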

Key Differences Between ETL and ELT

The main difference is where and when transformations happen.

In ETL:

Transform early

Store only curated data

In ELT:

Load early

Transform later inside the platform

This choice changes how teams structure pipelines and manage data.

Why ELT Became Popular in Cloud Platforms

Cloud systems changed the economics of data. Storage became cheap. Compute became elastic. Users wanted more freedom to explore data.

ELT supports this model because raw data is available for many use cases. Different teams can apply their own transformations for reporting, operations, or AI.

ETL vs ELT in Databricks

Databricks supports both ETL and ELT, but its architecture is more aligned with ELT due to the lakehouse approach.

With Databricks, raw data is loaded into cloud storage, often in a Bronze layer. Transformations happen in Silver and Gold layers. This matches the ELT workflow.

However, Databricks also supports ETL for cases such as compliance, filtering, or sensitive data removal before storage.

Databricks and the Medallion Architecture

Databricks introduced the Medallion architecture to organize data transformations in layers:

Bronze: raw data

Silver: cleaned and structured data

Gold: refined, aggregated, or business-ready data

This layered model matches ELT logic. Data is extracted and loaded into Bronze. Transformations happen within Databricks to create Silver and Gold.
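The sketch below walks a handful of hypothetical events through the three layers in plain Python. It does not use any Databricks API; on Databricks each layer would usually be a Delta table. The record format and the cleaning rules (drop malformed JSON, de-duplicate on event_id) are illustrative assumptions.

```python
import json
from collections import defaultdict

# Bronze: raw events kept exactly as ingested (hypothetical JSON strings),
# including a duplicate and a malformed record.
bronze = [
    '{"event_id": 1, "store": "north", "sales": 120.0}',
    '{"event_id": 2, "store": "south", "sales": 80.5}',
    '{"event_id": 2, "store": "south", "sales": 80.5}',
    'not valid json',
    '{"event_id": 3, "store": "north", "sales": 30.0}',
]

def to_silver(raw_records):
    """Silver: parse, validate, and de-duplicate the raw records."""
    seen, silver_rows = set(), []
    for record in raw_records:
        try:
            event = json.loads(record)
        except json.JSONDecodeError:
            continue  # malformed input stays in Bronze for later inspection
        if event["event_id"] in seen:
            continue  # skip duplicates
        seen.add(event["event_id"])
        silver_rows.append(event)
    return silver_rows

def to_gold(silver_rows):
    """Gold: aggregate cleaned rows into a business-ready view (sales per store)."""
    totals = defaultdict(float)
    for row in silver_rows:
        totals[row["store"]] += row["sales"]
    return dict(totals)

silver = to_silver(bronze)
gold = to_gold(silver)
print(len(bronze), len(silver), gold)
```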

This approach gives companies flexibility and supports machine learning, analytics, and real-time use cases.

ETL Workloads on Databricks

Some ETL scenarios remain useful. Examples include:

Removing sensitive data before storage

Filtering noisy data from sensors

Applying strict transformations for compliance

Cleaning data from legacy systems

ETL pipelines may be built with tools such as:

Databricks Jobs

Spark Structured Streaming

Databricks Workflows

Azure Data Factory or AWS Glue for preprocessing

In these cases, transformation happens before writing to the lake.
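As an example of transforming before writing, this sketch filters noisy sensor readings so that only plausible values are stored. The valid temperature range and the list used as a "lake" are assumptions for illustration; in a real pipeline the same filter would run inside the job before the write to storage.

```python
from dataclasses import dataclass

@dataclass
class Reading:
    sensor_id: str
    temperature_c: float

# Hypothetical plausible range for this sensor type; tune per device.
MIN_C, MAX_C = -40.0, 125.0

def filter_noise(readings):
    """Keep only readings inside the plausible range; drop the rest before storage."""
    return [r for r in readings if MIN_C <= r.temperature_c <= MAX_C]

def write_to_lake(readings, lake):
    """Stand-in for the write step; here the 'lake' is just a list."""
    lake.extend(readings)

incoming = [
    Reading("s1", 21.5),
    Reading("s1", 999.0),   # glitch value
    Reading("s2", -273.0),  # implausible for this sensor
    Reading("s2", 19.0),
]
lake = []
write_to_lake(filter_noise(incoming), lake)
print(lake)
```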

ELT Workloads on Databricks

Most companies use Databricks for ELT because it allows:

Faster iteration for analytics

Multiple transformations of the same raw data

Exploration and discovery

Machine learning preparation

Time travel and version control

Raw data enters the lake first and is transformed inside Delta Lake tables.

Delta Lake and Transformation Flexibility

Delta Lake plays an important role in ELT. It provides:

ACID transactions

Schema enforcement

Time travel

MERGE operations for change data capture (CDC)

Efficient, Parquet-based storage

These features allow transformations to happen after loading without losing control or quality.
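On Databricks, CDC upserts are typically written with Delta Lake's MERGE INTO statement, and older table versions can be queried with time travel (for example VERSION AS OF). The toy class below is not the Delta Lake API; it only mimics those two ideas in plain Python so the behaviour is easy to see. Each merge commits a whole batch as a new version, and earlier versions can still be read. The customer records are hypothetical.

```python
import copy

class VersionedTable:
    """Toy table keyed by id that keeps every committed version.

    It only mimics MERGE-style upserts for CDC and reading an older
    version (time travel). It is not the Delta Lake API.
    """

    def __init__(self):
        self._versions = [{}]  # version 0 is an empty table

    def merge(self, changes):
        """Apply a batch of CDC records as one new version.

        Each change is (op, key, value) with op in {"upsert", "delete"}.
        """
        snapshot = copy.deepcopy(self._versions[-1])
        for op, key, value in changes:
            if op == "upsert":
                snapshot[key] = value  # update when matched, insert when not
            elif op == "delete":
                snapshot.pop(key, None)
            else:
                raise ValueError(f"unknown op: {op}")
        self._versions.append(snapshot)  # commit only after the whole batch succeeds

    def read(self, version=None):
        """Read the latest version, or an older one by number."""
        return dict(self._versions[-1 if version is None else version])

customers = VersionedTable()
customers.merge([("upsert", 1, {"name": "Ana", "tier": "basic"}),
                 ("upsert", 2, {"name": "Ben", "tier": "basic"})])
customers.merge([("upsert", 1, {"name": "Ana", "tier": "gold"}),
                 ("delete", 2, None)])

print(customers.read())           # latest state
print(customers.read(version=1))  # state after the first merge
```

Because the snapshot is only appended after every change in the batch is applied, a failing batch leaves the table unchanged, which loosely reflects the all-or-nothing behaviour of a transactional commit.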

ETL vs ELT for Machine Learning

Machine learning workloads usually benefit from ELT because:

Data scientists need raw data

Training data changes often

Feature engineering requires many transformations

Historical versions must be stored

ETL discards raw data before it is stored, which limits ML flexibility.
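To illustrate why keeping raw data helps feature engineering, the sketch below computes per-customer features from hypothetical raw purchase events using two different time windows. If only a pre-aggregated total had been stored, the second feature definition could not be computed without going back to the source. The event layout and feature names are assumptions.

```python
from statistics import mean

# Hypothetical raw purchase events kept in the lake: (customer_id, day, amount).
raw_events = [
    ("c1", 1, 20.0), ("c1", 2, 35.0), ("c1", 9, 10.0),
    ("c2", 3, 50.0), ("c2", 4, 70.0),
]

def features(events, window_days, as_of_day):
    """Compute per-customer features over a trailing window ending at as_of_day."""
    per_customer = {}
    for customer, day, amount in events:
        if as_of_day - window_days < day <= as_of_day:
            per_customer.setdefault(customer, []).append(amount)
    return {c: {"count": len(a), "avg_amount": mean(a)} for c, a in per_customer.items()}

# Because the raw events are kept, a new feature definition (a different
# window) can be tried without re-extracting data from the source system.
print(features(raw_events, window_days=7, as_of_day=9))
print(features(raw_events, window_days=3, as_of_day=9))
```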

ETL vs ELT for Business Intelligence

BI teams care about consistency and correctness. ELT helps because multiple curated data sets can be created from a single raw source.

However, strict BI warehouses sometimes still use ETL to enforce a single version of the truth.

Databricks supports both patterns, which gives BI teams choice.
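Here is a small sketch of building more than one curated dataset from a single raw source. sqlite3 stands in for the platform and SQL views stand in for curated tables; the table, columns, and figures are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# One raw source table (hypothetical sales lines), loaded as-is.
conn.execute("CREATE TABLE raw_sales (region TEXT, product TEXT, qty INTEGER, price REAL)")
conn.executemany("INSERT INTO raw_sales VALUES (?, ?, ?, ?)", [
    ("EU", "widget", 3, 2.0),
    ("EU", "gadget", 1, 10.0),
    ("US", "widget", 5, 2.0),
])

# Two curated datasets built from the same raw table for different BI needs.
conn.execute("""CREATE VIEW revenue_by_region AS
                SELECT region, SUM(qty * price) AS revenue
                FROM raw_sales GROUP BY region""")
conn.execute("""CREATE VIEW units_by_product AS
                SELECT product, SUM(qty) AS units
                FROM raw_sales GROUP BY product""")

print(conn.execute("SELECT * FROM revenue_by_region ORDER BY region").fetchall())
print(conn.execute("SELECT * FROM units_by_product ORDER BY product").fetchall())
```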

ETL vs ELT for Real-Time Scenarios

Sensor, IoT, and event-driven data often works best with ELT. Raw events are ingested, then transformations add structure.

Energy companies, healthcare networks, and logistics operations often use ELT to support:

Live dashboards

Operational decision systems

Predictive maintenance

Forecasting models

ETL can also work in real time, but it is less flexible because raw events are discarded before storage.
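Structured Streaming processes new data incrementally and records its progress in a checkpoint. The plain-Python class below imitates that idea for an ELT flow: raw events are appended to a Bronze list as they arrive, and each run transforms only the events added since the last checkpoint. It is an illustration of the pattern, not how Spark implements checkpoints, and the meter-reading format is hypothetical.

```python
class IncrementalPipeline:
    """Toy ELT flow: append raw events to Bronze, then transform new ones into Silver."""

    def __init__(self):
        self.bronze = []     # append-only raw events, stored as received
        self.silver = []     # parsed, typed records derived from Bronze
        self.checkpoint = 0  # index of the next Bronze event to transform

    def ingest(self, raw_events):
        """Load step: append raw events without changing them."""
        self.bronze.extend(raw_events)

    def process_new_events(self):
        """Transform only events added since the last run, then advance the checkpoint."""
        for raw in self.bronze[self.checkpoint:]:
            meter_id, kwh = raw.split(",")
            self.silver.append({"meter_id": meter_id, "kwh": float(kwh)})
        self.checkpoint = len(self.bronze)

pipeline = IncrementalPipeline()
pipeline.ingest(["m1,0.5", "m2,1.25"])
pipeline.process_new_events()
pipeline.ingest(["m1,0.75"])
pipeline.process_new_events()  # only the third event is transformed here
print(pipeline.checkpoint, pipeline.silver)
```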

Cost Considerations

Cloud economics make ELT attractive, but cost is still a factor.

ETL may reduce storage cost by discarding data early. ELT increases storage cost but can reduce engineering cost and speed up development.

Databricks allows companies to control cost by using:

Cluster autoscaling

Spot pricing

Efficient file storage

Optimised Delta tables

Cost strategy depends on workload type.

Security Considerations

Security concerns affect the choice between ETL and ELT.

ETL may be required to clean data before loading when:

Raw data contains sensitive fields

Compliance rules demand preprocessing

PII must be removed
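One common pre-load step is to drop or pseudonymize sensitive fields. The sketch below removes hypothetical ssn and phone fields and replaces the email with a keyed SHA-256 hash (HMAC), so records can still be joined on the pseudonym without storing the address itself. The field names and the hard-coded key are assumptions for illustration, and whether a keyed hash is sufficient depends on the compliance rules that apply.

```python
import hashlib
import hmac

# Hypothetical key for illustration only; a real pipeline would read it from a secret store.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"

def pseudonymize(value: str) -> str:
    """Replace a PII value with a keyed hash so records can still be joined."""
    return hmac.new(PSEUDONYM_KEY, value.lower().encode("utf-8"), hashlib.sha256).hexdigest()

def clean_before_load(record: dict) -> dict:
    """ETL step: drop or pseudonymize sensitive fields before the data is stored."""
    cleaned = {k: v for k, v in record.items() if k not in {"ssn", "phone"}}
    cleaned["email"] = pseudonymize(record["email"])
    return cleaned

raw = {"patient_id": 42, "email": "Ana@example.com", "ssn": "000-00-0000",
       "phone": "555-0100", "diagnosis_code": "J45"}
print(clean_before_load(raw))
```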

For ELT workloads on Databricks, Unity Catalog provides governance and Delta Sharing provides controlled data sharing.

Performance Considerations

ELT can be faster for development because transformations use scalable compute. ETL performance often depends on external tools.

Databricks can scale transformations across many nodes, which improves speed.
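Spark scales a transformation by splitting data into partitions, processing them in parallel, and combining the partial results. The sketch below shows that split, process, combine shape on one machine with a thread pool. It illustrates the structure only: Python threads will not speed up CPU-bound work like this, and Spark distributes partitions across cluster nodes rather than threads. The event data is hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

def partition(rows, n):
    """Split rows into n partitions, round-robin."""
    return [rows[i::n] for i in range(n)]

def count_partition(rows):
    """Per-partition work: count events by type."""
    return Counter(event_type for event_type, _ in rows)

def parallel_count(rows, workers=4):
    """Process partitions concurrently, then combine the partial counts."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(count_partition, partition(rows, workers)))
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total

# Hypothetical events: (event_type, event_id)
events = [("click" if i % 3 == 0 else "view", i) for i in range(10)]
print(parallel_count(events))
```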

Common Enterprise Scenarios

Here are common patterns companies follow:

Scenario 1: ETL for Compliance

Data filtered before storage for industries like healthcare or finance.

Scenario 2: ELT for Analytics

Raw data stored in Bronze for shared analytics.

Scenario 3: ELT for ML

Raw and historical data kept for feature engineering.

Scenario 4: Hybrid

Some fields cleaned with ETL, the rest processed through ELT.
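A hybrid flow might look like the sketch below: a hypothetical card_number field is removed before anything is stored (the ETL part), and the remaining fields are loaded raw and aggregated with SQL afterwards (the ELT part). sqlite3 stands in for the platform, and the transactions are made up for the example.

```python
import sqlite3

SENSITIVE_FIELDS = {"card_number"}  # hypothetical field list set by compliance

def etl_step(record):
    """ETL part: remove sensitive fields before anything is stored."""
    return {k: v for k, v in record.items() if k not in SENSITIVE_FIELDS}

source = [
    {"txn_id": 1, "card_number": "0000000000000001", "amount": "12.50", "channel": "web"},
    {"txn_id": 2, "card_number": "0000000000000002", "amount": "7.25", "channel": "store"},
    {"txn_id": 3, "card_number": "0000000000000003", "amount": "3.00", "channel": "web"},
]

conn = sqlite3.connect(":memory:")
# Bronze keeps the remaining fields raw (amount is still text).
conn.execute("CREATE TABLE bronze_txn (txn_id INTEGER, amount TEXT, channel TEXT)")
conn.executemany(
    "INSERT INTO bronze_txn VALUES (:txn_id, :amount, :channel)",
    [etl_step(r) for r in source],
)

# ELT part: transform inside the platform with SQL after loading.
rows = conn.execute("""
    SELECT channel, SUM(CAST(amount AS REAL)) AS revenue
    FROM bronze_txn GROUP BY channel ORDER BY channel
""").fetchall()
print(rows)
```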

Databricks supports all four.

Choosing Between ETL and ELT

Business leaders should consider:

Data sensitivity

Workload type

Compliance needs

ML requirements

Speed to insight

Storage cost

Engineering resources

Companies building AI platforms should favor ELT for flexibility.

Databricks as a Unified Platform for ETL and ELT

One major advantage of Databricks is its unified architecture. It supports:

Batch processing

Streaming

BI analytics

Governance

Collaboration

This allows companies to avoid disconnected tools and duplicated data.

Why the Industry Is Moving to ELT

Several factors explain the move toward ELT:

Storage is cheap

Compute is scalable

AI models need raw data

Time travel improves debugging

Governance tools simplify access

Collaboration needs flexibility

Modern data platforms align with ELT more than ETL.

Conclusion: How Tenplus Helps Companies Navigate ETL vs ELT Decisions

ETL and ELT are not competitors. They are patterns that support different needs. Databricks gives companies the freedom to use both. The right choice depends on the workload, compliance rules, and desired outcomes.

Tenplus helps organisations design modern data platforms with Databricks using ELT and ETL in the right places. Tenplus supports data pipeline design, Delta Lake modeling, security, governance, and machine learning workloads. For companies that want to move beyond siloed data and build AI-ready platforms, Tenplus makes the journey faster and safer. Tenplus also offers a free 15-day Proof of Concept so teams can validate Databricks and platform design with real data.

For leaders planning to modernise data systems, ELT on Databricks provides flexibility, speed, and scale while still supporting the structured control that ETL has provided for years.

FAQs

1. What is the main difference between ETL and ELT?

ETL transforms data before loading it into the target system. ELT loads raw data first, then transforms it inside the target platform. The order of the steps is the key difference.

2. Why is ELT more common in cloud platforms like Databricks?

Cloud platforms have cheap storage and flexible compute. ELT takes advantage of this by loading raw data first and then transforming it using scalable compute, which supports analytics and AI better.

3. Does Databricks support both ETL and ELT?

Yes. Databricks supports both ETL and ELT. Most companies use ELT because it matches the lakehouse design, but ETL is useful for compliance or sensitive data scenarios.

4. Which approach is better for machine learning?

ELT is better for machine learning because data scientists need raw data for feature engineering and training. ELT keeps historical and detailed data available for experiments.

5. How do companies choose between ETL and ELT?

The choice depends on data sensitivity, compliance rules, performance, cost, and how fast the business needs insights. Many companies use a hybrid model that mixes both.