Modern businesses generate large volumes of data every day. This data comes from applications, sensors, APIs, databases, and files. To turn this raw data into useful insights, companies need a reliable ETL pipeline.

Databricks is a widely used platform for building scalable data pipelines. It combines distributed processing, structured storage, and automation in one environment.

In this guide, you will learn how to build an ETL pipeline in Databricks step by step. We will cover architecture, ingestion, transformation, storage, orchestration, and monitoring.

- What Is an ETL Pipeline

- Why Use Databricks for ETL

- Step 1: Define the ETL Pipeline Architecture

- Step 2: Extract Data in Databricks

- Step 3: Load Raw Data into Bronze Layer

- Step 4: Transform Data into Silver Layer

- Step 5: Create Gold Layer for Analytics

- Step 6: Automate the ETL Pipeline

- Step 7: Handle Incremental Loads

- Step 8: Monitor the ETL Pipeline

- Step 9: Optimize Performance

- Step 10: Secure Your ETL Pipeline

- Best Practices for Building an ETL Pipeline in Databricks

- Common Challenges in ETL Pipelines

- Real Use Cases of ETL Pipelines in Databricks

- ETL vs ELT in Databricks

- Why Proper Design Matters

- How Tenplus Helps You Build Scalable ETL Pipelines

- FAQs

What Is an ETL Pipeline

ETL stands for Extract, Transform, and Load.

An ETL pipeline performs three main steps:

- Extract data from source systems

- Transform data into a usable format

- Load the processed data into a target system
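The three steps above can be sketched in plain Python, without Spark, to make the flow concrete. The sample CSV text, the column names, and the in-memory `target` list are assumptions made for illustration; a real pipeline would read from source systems and write to tables.

```python
import csv
import io

# Hypothetical raw input; in practice this would come from a source system.
RAW_CSV = """order_id,region,amount
1,North,10.50
2,South,not_a_number
3,North,4.50
"""


def extract(text):
    """Extract: parse raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: cast fields to typed values and drop rows that cannot be cast."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # filter out invalid records
        clean.append({
            "order_id": int(row["order_id"]),
            "region": row["region"],
            "amount": amount,
        })
    return clean


def load(rows, target):
    """Load: append processed rows to the target store (a list here)."""
    target.extend(rows)
    return target


target = load(transform(extract(RAW_CSV)), [])
```

Keeping each step as its own function mirrors how the stages are later split into separate layers and tasks.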

In Databricks, ETL pipelines are built using Spark, Delta Lake, and workflow automation tools.

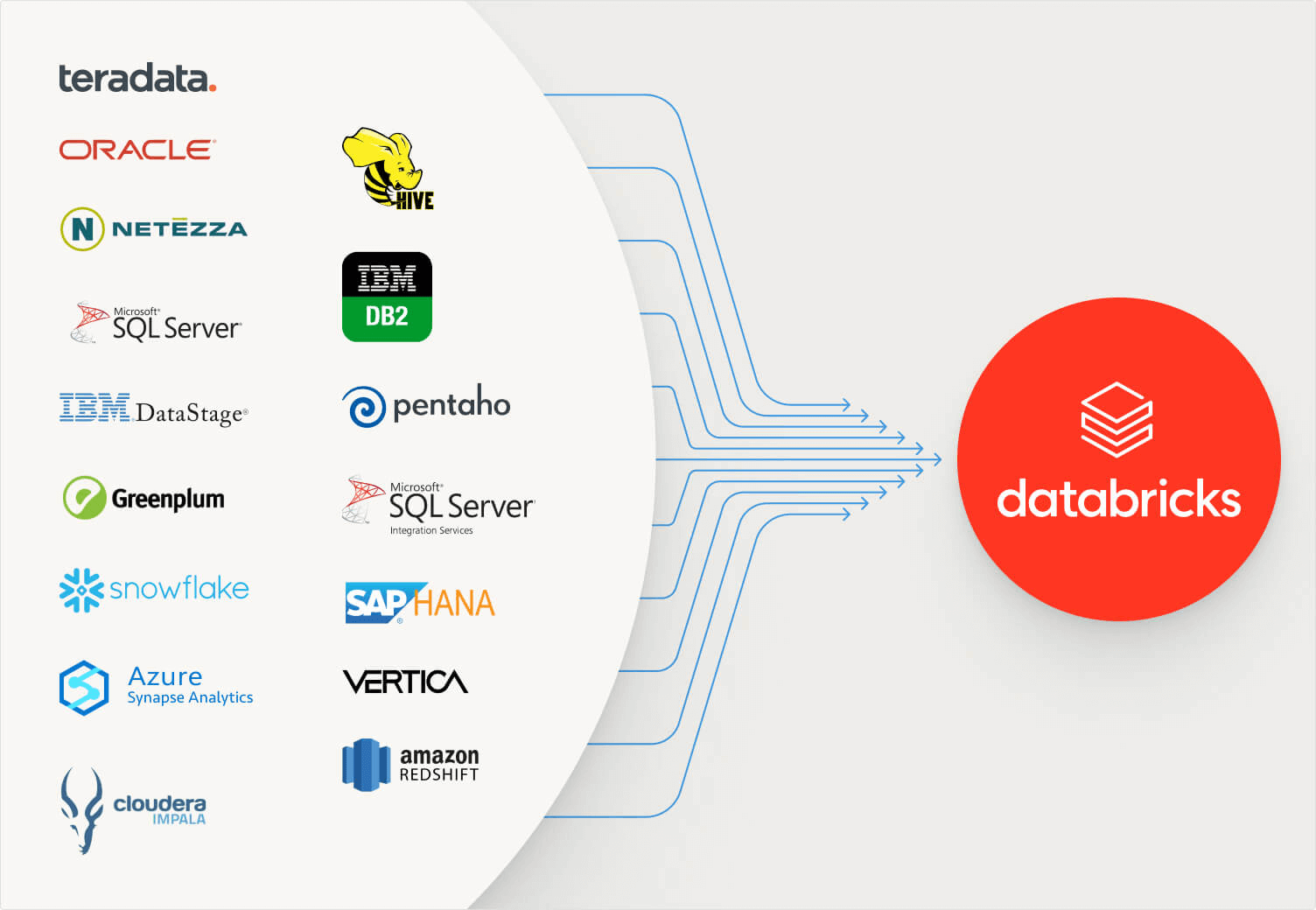

Why Use Databricks for ETL

Databricks provides several advantages for building an ETL pipeline:

- Scalable distributed computing

- Integration with cloud storage

- Built-in support for Delta Lake

- Easy notebook-based development

- Job scheduling and automation

- Integration with governance tools

Databricks is suitable for both batch and streaming pipelines.

Step 1: Define the ETL Pipeline Architecture

Before writing code, define your architecture.

A common architecture includes:

Data Sources

- Databases

- APIs

- IoT devices

- Flat files

Ingestion Layer

- Auto Loader

- Spark batch reads

- Streaming connectors

Storage Layer

- Delta tables organized into Bronze, Silver, and Gold layers

Orchestration Layer

- Databricks Workflows

- Jobs scheduler

Planning your architecture reduces rework later.

Step 2: Extract Data in Databricks

Extraction depends on your source.

Extract from Cloud Storage

df = spark.read.format("csv").option("header", "true").load("/mnt/raw/sales.csv")

Extract from Database

df = spark.read.format("jdbc") \
    .option("url", "jdbc:sqlserver://server") \
    .option("dbtable", "dbo.sales") \
    .option("user", "username") \
    .option("password", "password") \
    .load()

Extract Streaming Data

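df = spark.readStream.format("cloudFiles") \
    .option("cloudFiles.format", "json") \
    .option("cloudFiles.schemaLocation", "/mnt/streaming/_schema") \
    .load("/mnt/streaming/")

Auto Loader needs a schema location (or an explicit schema) to infer and track the schema of JSON input; the /mnt/streaming/_schema path is a placeholder.

This completes the extract phase of your ETL pipeline.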
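For database sources, it can help to assemble the connection settings in one place rather than hard-coding them in the read call. The following is a minimal sketch: the helper `build_jdbc_options`, the database name `sales_db`, the default port, and the secret scope and key names in the comments are all hypothetical.

```python
def build_jdbc_options(host, database, table, user, password, port=1433):
    """Return reader options for a SQL Server source as a plain dict."""
    return {
        "url": f"jdbc:sqlserver://{host}:{port};databaseName={database}",
        "dbtable": table,
        "user": user,
        "password": password,
    }


# Hypothetical values. On Databricks the password would typically be read
# from a secret scope, for example:
#   password = dbutils.secrets.get(scope="etl", key="sql-password")
options = build_jdbc_options(
    host="server",
    database="sales_db",
    table="dbo.sales",
    user="username",
    password="<from-secret-scope>",
)

# On a cluster, the dict can be passed to the reader in one call:
#   df = spark.read.format("jdbc").options(**options).load()
```

Building the options as a dictionary keeps credentials out of notebook cells and makes the same settings reusable across several tables.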

Step 3: Load Raw Data into Bronze Layer

The Bronze layer stores raw data without heavy transformation.

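df.write.format("delta").mode("append").saveAsTable("bronze_sales")

This call applies to batch DataFrames. A streaming DataFrame, such as one read with Auto Loader, is written with writeStream and a checkpoint location instead.

Using the Delta format provides ACID transactions and schema enforcement.

The Bronze layer acts as your source of truth: downstream layers can be rebuilt from it.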

Step 4: Transform Data into Silver Layer

Now transform the data.

Common transformations include:

- Removing duplicates

- Standardizing column names

- Casting data types

- Filtering invalid records

Example transformation:

from pyspark.sql.functions import col

silver_df = df.dropDuplicates() \
    .withColumn("amount", col("amount").cast("double"))

silver_df.write.format("delta").mode("overwrite").saveAsTable("silver_sales")

The Silver layer contains clean and structured data.
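Standardizing column names, one of the transformations listed above, can be handled with a small pure-Python helper. This is a sketch under assumptions: the helper name `to_snake_case` and the sample column names are hypothetical, and snake_case is only one possible naming convention.

```python
import re


def to_snake_case(name):
    """Convert a column name such as 'Order ID' or 'orderDate' to snake_case."""
    name = name.strip()
    # Insert an underscore between a lowercase letter or digit and an uppercase letter.
    name = re.sub(r"([a-z0-9])([A-Z])", r"\1_\2", name)
    # Replace any run of non-alphanumeric characters with a single underscore.
    name = re.sub(r"[^0-9a-zA-Z]+", "_", name)
    return name.strip("_").lower()


raw_columns = ["Order ID", "orderDate", " Region ", "Amount(USD)"]
clean_columns = [to_snake_case(c) for c in raw_columns]

# On a cluster, the cleaned names can be applied to a DataFrame in one step:
#   silver_df = df.toDF(*[to_snake_case(c) for c in df.columns])
```

Names without spaces or parentheses are also easier to store, since some characters are not accepted in table column names by default.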

Step 5: Create Gold Layer for Analytics

The Gold layer contains aggregated and business-ready data.

Example:

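from pyspark.sql.functions import sum as spark_sum

gold_df = silver_df.groupBy("region").agg(spark_sum("amount").alias("total_amount"))

gold_df.write.format("delta").mode("overwrite").saveAsTable("gold_sales_summary")

The alias gives the aggregate a plain column name, total_amount. The default name, sum(amount), contains parentheses, which Delta tables reject unless column mapping is enabled.

This data is now ready for dashboards and reporting tools.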

Step 6: Automate the ETL Pipeline

Manual execution is not practical for production.

Databricks allows automation using Jobs and Workflows.

Create a Job

- Go to Workflows

- Create a new job

- Add tasks

- Schedule execution

You can define dependencies between tasks such as Bronze to Silver to Gold.

This ensures your ETL pipeline runs automatically.
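The Bronze to Silver to Gold dependency described above forms a small directed graph. As a minimal sketch of how a run order follows from such dependencies, the standard-library `graphlib` module can sort tasks topologically. This illustrates the concept only; it is not how Databricks Workflows is implemented, and the task names are hypothetical.

```python
from graphlib import TopologicalSorter

# Each key is a task; its value is the set of tasks it depends on.
# The task names are hypothetical and mirror the Bronze, Silver, Gold layers.
dependencies = {
    "bronze_ingest": set(),
    "silver_transform": {"bronze_ingest"},
    "gold_aggregate": {"silver_transform"},
}

# static_order() yields tasks so that every dependency precedes its dependents.
run_order = list(TopologicalSorter(dependencies).static_order())
```

If a task is added that depends on itself through a chain of other tasks, `TopologicalSorter` raises a `CycleError`, which is the same kind of mistake a job definition should avoid.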

Step 7: Handle Incremental Loads

Most systems require incremental updates instead of full reloads.

Use MERGE for incremental processing:

MERGE INTO silver_sales AS target
USING bronze_sales AS source
ON target.order_id = source.order_id
WHEN MATCHED THEN UPDATE SET *
WHEN NOT MATCHED THEN INSERT *

Incremental processing reduces cost and improves performance.
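The matched and not-matched branches of a merge can be illustrated with plain Python dictionaries. This is a sketch of the upsert semantics only, not of how Delta Lake executes a merge; the helper `merge_upsert` and the sample rows are hypothetical.

```python
def merge_upsert(target, source, key):
    """Apply source rows to target: update matching keys, insert new ones."""
    merged = {row[key]: dict(row) for row in target}
    for row in source:
        # A matching key overwrites the old row; a new key is inserted.
        merged[row[key]] = dict(row)
    return sorted(merged.values(), key=lambda r: r[key])


silver = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": 2, "amount": 20.0},
]
new_batch = [
    {"order_id": 2, "amount": 25.0},  # matched: updates the existing row
    {"order_id": 3, "amount": 30.0},  # not matched: inserted as a new row
]

silver = merge_upsert(silver, new_batch, key="order_id")
```

One difference is worth noting: in this sketch the last source row for a key silently wins, whereas a Delta merge fails when several source rows match the same target row. Deduplicating the source on the merge key before merging avoids that error.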

Step 8: Monitor the ETL Pipeline

Monitoring ensures reliability.

Use:

- Job run history

- Spark UI

- Cluster metrics

- Alerts

Databricks jobs can send notifications when a run starts, succeeds, or fails, either by email or to external monitoring systems.

Logging is critical for production pipelines.
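As a minimal sketch of task-level logging, the wrapper below records every attempt of a task and retries on failure, using only the standard library. The function `run_with_retry`, the task name, the log message layout, and the retry count are assumptions for illustration; Databricks jobs also offer their own retry settings.

```python
import logging
import time

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("etl")


def run_with_retry(task, name, attempts=3, delay_seconds=0.0):
    """Run task(), logging each attempt; re-raise after the final failure."""
    for attempt in range(1, attempts + 1):
        try:
            result = task()
            logger.info("task=%s attempt=%d status=success", name, attempt)
            return result
        except Exception:
            # logger.exception also records the traceback for later diagnosis.
            logger.exception("task=%s attempt=%d status=failed", name, attempt)
            if attempt == attempts:
                raise
            time.sleep(delay_seconds)


# Hypothetical flaky task that fails once and then succeeds.
calls = {"count": 0}


def flaky_task():
    calls["count"] += 1
    if calls["count"] < 2:
        raise RuntimeError("transient failure")
    return "ok"


result = run_with_retry(flaky_task, name="silver_transform")
```

Using a consistent key=value message layout makes the log lines easy to search and aggregate in whatever monitoring system receives them.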

Step 9: Optimize Performance

To improve ETL pipeline performance:

Use Partitioning

Partition large tables by date or region.

Run Optimize

OPTIMIZE silver_sales;

Use Autoscaling Clusters

Enable autoscaling to adjust compute resources automatically.

Avoid Small Files

Combine small files to improve read speed.

Performance tuning reduces cost and improves reliability.
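To make the small-file idea concrete, the sketch below greedily groups file sizes into batches near a target size. It only illustrates why compaction reduces the number of files to read; it does not describe how OPTIMIZE works internally, and the 128 MB target and the file sizes are assumptions chosen for the example.

```python
def plan_compaction(file_sizes_mb, target_mb=128):
    """Group file sizes into batches totalling at most target_mb each.

    A single file larger than target_mb is placed in a batch of its own.
    """
    batches, current, current_total = [], [], 0
    for size in sorted(file_sizes_mb, reverse=True):
        # Close the current batch when adding this file would exceed the target.
        if current and current_total + size > target_mb:
            batches.append(current)
            current, current_total = [], 0
        current.append(size)
        current_total += size
    if current:
        batches.append(current)
    return batches


# Hypothetical sizes (in MB) of small files in one partition.
small_files = [5, 60, 40, 20, 3, 70, 10]
batches = plan_compaction(small_files)
```

Here seven small files become three larger ones, so a query over this partition opens fewer files for the same amount of data.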

Step 10: Secure Your ETL Pipeline

Security is critical for business data.

Use:

- Role-based access control

- Unity Catalog for governance

- Secret scopes for credentials

- Network policies

Security must be part of pipeline design.

Best Practices for Building an ETL Pipeline in Databricks

Follow these practices:

- Use Medallion architecture

- Separate compute and storage

- Use Delta format

- Implement incremental logic

- Schedule regular optimization

- Monitor failures proactively

- Document data transformations

A structured design ensures long-term scalability.

Common Challenges in ETL Pipelines

Many organizations face:

- Data schema changes

- Duplicate records

- Slow processing

- Unreliable job scheduling

- High cloud cost

Databricks provides tools that address many of these challenges, but the results still depend on correct implementation.
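Schema changes, the first challenge listed above, can be caught early by comparing an incoming schema with the expected one before loading. The following is a minimal sketch; the helper `detect_schema_drift` and both sample schemas are hypothetical.

```python
def detect_schema_drift(expected, incoming):
    """Compare two {column: type} mappings and report the differences."""
    return {
        "added": sorted(set(incoming) - set(expected)),
        "removed": sorted(set(expected) - set(incoming)),
        "type_changed": sorted(
            col for col in set(expected) & set(incoming)
            if expected[col] != incoming[col]
        ),
    }


# Hypothetical schemas expressed as column name -> type name.
expected_schema = {"order_id": "int", "region": "string", "amount": "double"}
incoming_schema = {
    "order_id": "int",
    "region": "string",
    "amount": "string",
    "channel": "string",
}

drift = detect_schema_drift(expected_schema, incoming_schema)

# On a cluster, the incoming mapping could be built from a DataFrame:
#   incoming_schema = dict(df.dtypes)
```

A pipeline can then decide per category what to do, for example accepting added columns but failing the run when a type changes.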

Real Use Cases of ETL Pipelines in Databricks

Energy Industry

Processing sensor and SCADA data for forecasting and optimization.

Healthcare

Centralizing patient data and operational reports.

Retail

Analyzing customer transactions and sales trends.

Finance

Building reporting systems with regulatory compliance.

An ETL pipeline is the backbone of modern analytics.

ETL vs ELT in Databricks

Databricks can support both ETL and ELT.

In ETL, transformation happens before loading into final storage.

In ELT, raw data is loaded first, and transformation happens inside the lakehouse.

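Most modern Databricks architectures follow ELT patterns, but the ETL pipeline concept still applies. The Bronze, Silver, and Gold flow in this guide is an example: raw data lands first and is transformed inside the lakehouse.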

Why Proper Design Matters

A poorly designed ETL pipeline can cause:

- Data inconsistencies

- Broken dashboards

- High compute cost

- Slow query performance

A well-designed pipeline provides:

- Reliable insights

- Faster reporting

- Scalable architecture

- Reduced operational risk

Design determines long-term success.

How Tenplus Helps You Build Scalable ETL Pipelines

Building an ETL pipeline in Databricks requires technical expertise in Spark, Delta Lake, workflow orchestration, and cloud architecture.

Tenplus offers a free 15-day proof of concept. Businesses can validate one real use case, test pipeline performance, and assess scalability before full implementation.

Whether you operate in energy, healthcare, retail, finance, or manufacturing, Tenplus builds reliable ETL pipelines that support analytics, AI, and long-term growth.

If your organization is planning to modernize data infrastructure, Tenplus provides the expertise to build secure, efficient, and production-ready ETL pipelines in Databricks.

FAQs

What is an ETL pipeline in Databricks?

An ETL pipeline in Databricks extracts data from source systems, transforms it using Spark, and loads it into Delta tables. It helps businesses organize data for analytics, reporting, and AI use cases.

Can Databricks handle large ETL workloads?

Yes. Databricks is built for distributed computing. It can process large volumes of batch and streaming data using scalable clusters and Delta Lake storage.

What is the difference between ETL and ELT in Databricks?

In ETL, data is transformed before loading into the final storage. In ELT, raw data is loaded first and transformed inside the lakehouse. Databricks supports both approaches depending on business needs.