Modern data platforms need storage that is reliable, fast, and easy to manage. Traditional data lakes store files, but they often lack transaction control, schema enforcement, and version tracking. This can cause data quality issues and broken pipelines.

This is where delta tables become important.

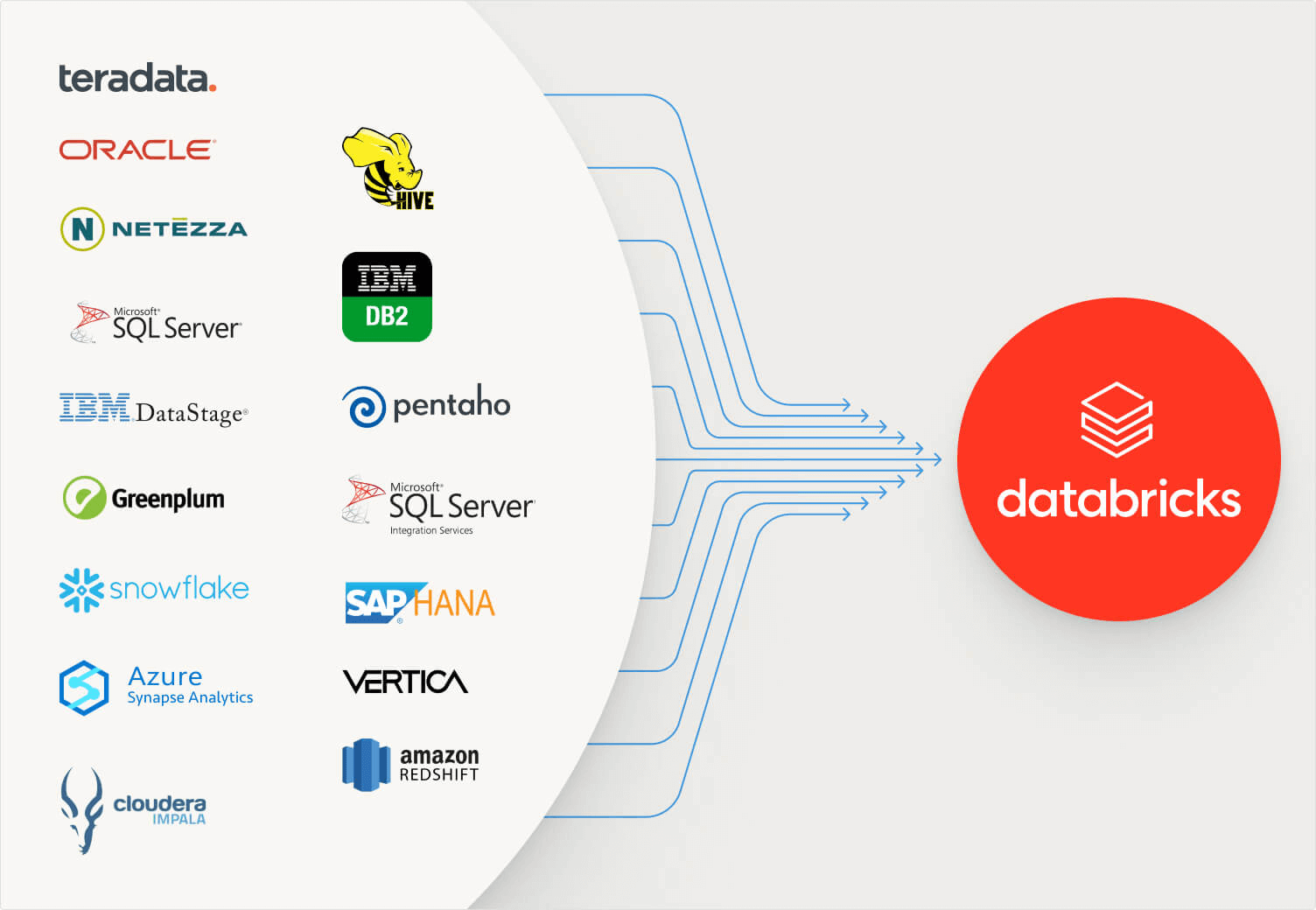

Delta tables combine the flexibility of data lakes with the reliability of data warehouses. They are built on Delta Lake and are widely used with platforms like Databricks.

In this guide, we explain how to use delta tables step by step. We cover creation, loading data, updating data, optimizing performance, and managing history.

- What Are Delta Tables

- Why Use Delta Tables

- Step 1: Create a Delta Table

- Step 2: Load Data into Delta Tables

- Step 3: Query Delta Tables

- Step 4: Update Data in Delta Tables

- Step 5: Delete Data from Delta Tables

- Step 6: Use Merge for Upserts

- Step 7: Enable Schema Enforcement

- Step 8: Use Time Travel

- Step 9: Optimize Delta Tables

- Step 10: Vacuum Old Files

- Best Practices for Using Delta Tables

- Delta Tables in Real Business Use Cases

- Delta Tables vs Parquet Tables

- Security and Governance with Delta Tables

- Common Mistakes to Avoid

- When Should You Use Delta Tables

- Conclusion: How Tenplus Helps You Implement Delta Tables Correctly

- FAQs

What Are Delta Tables

Delta tables are tables stored using the Delta Lake format. They sit on cloud storage such as Azure Data Lake, Amazon S3, or Google Cloud Storage.

Delta tables provide:

- ACID transactions

- Schema enforcement

- Schema evolution

- Time travel

- Merge operations

- High performance

Unlike simple Parquet tables, delta tables maintain a transaction log. This log tracks every change made to the data.
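To make the idea of a transaction log concrete, here is a minimal sketch in plain Python. It is a toy model, not Delta Lake's actual implementation: the file names are made up, and a real log stores richer JSON actions. It only shows how replaying "add" and "remove" entries yields the set of files that make up a given version.

```python
# Toy model of a Delta-style transaction log (illustrative only).
# Each commit is a list of (action, file) pairs; replaying commits 0..N
# gives the data files that make up version N of the table.

def live_files(log, version):
    """Return the set of data files visible at `version`."""
    files = set()
    for commit in log[: version + 1]:
        for action, path in commit:
            if action == "add":
                files.add(path)
            elif action == "remove":
                files.discard(path)
    return files


# Hypothetical log: the file names are invented for this example.
log = [
    [("add", "part-000.parquet")],                                  # version 0: first write
    [("add", "part-001.parquet")],                                  # version 1: append
    [("remove", "part-000.parquet"), ("add", "part-002.parquet")],  # version 2: rewrite
]

print(sorted(live_files(log, 1)))  # ['part-000.parquet', 'part-001.parquet']
print(sorted(live_files(log, 2)))  # ['part-001.parquet', 'part-002.parquet']
```

Because older commits are never edited, any earlier version can be rebuilt by stopping the replay sooner.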

Why Use Delta Tables

Before learning how to use delta tables, it is important to understand their benefits.

Reliable Data Writes

Delta tables prevent partial writes and corruption. If a job fails, the table remains consistent.

Version History

Every write (insert, update, delete, or merge) creates a new table version. You can query old versions of data.

Easy Updates and Deletes

Unlike traditional data lakes, delta tables support updates and deletes.

Better Performance

Delta tables support file compaction and data-skipping optimizations such as Z-ordering.

Step 1: Create a Delta Table

You can create delta tables using SQL or Spark.

Using SQL

CREATE TABLE sales_data (

order_id INT,

customer_id INT,

amount DOUBLE,

order_date DATE

)

USING DELTA;

This command creates a managed delta table.

Using Spark

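df.write.format("delta").save("/mnt/delta/sales_data")

This writes a DataFrame in Delta format to the given storage path. To register the table in the catalog by name instead, call saveAsTable("sales_data") in place of save(...).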

Step 2: Load Data into Delta Tables

Once the table is created, you can insert data.

Insert Using SQL

INSERT INTO sales_data VALUES (1, 1001, 250.50, DATE '2026-01-01');

Append Using Spark

df.write.format("delta").mode("append").save("/mnt/delta/sales_data")

Append mode adds new records without overwriting existing data.

Step 3: Query Delta Tables

You can query delta tables like regular SQL tables.

SELECT * FROM sales_data;

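Delta tables support fast queries because the data is stored as columnar Parquet files, and the transaction log keeps per-file statistics that let the engine skip files a query does not need.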
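A minimal sketch of file pruning with per-file min/max statistics, the mechanism usually called data skipping. The statistics and file names below are hypothetical, and the real engine handles many more cases (nulls, multiple predicates, partition values).

```python
# Toy data-skipping example: keep only the files whose [min, max] range
# for a column could contain the value being searched for.

def files_to_scan(file_stats, column, value):
    """Return the names of files that may contain rows where column == value."""
    return [
        name
        for name, stats in file_stats.items()
        if stats[column][0] <= value <= stats[column][1]
    ]


# Hypothetical per-file statistics: (min, max) of order_id in each file.
file_stats = {
    "part-000.parquet": {"order_id": (1, 1000)},
    "part-001.parquet": {"order_id": (1001, 2000)},
    "part-002.parquet": {"order_id": (2001, 3000)},
}

# A query such as SELECT * FROM sales_data WHERE order_id = 1500
# only needs to open one of the three files.
print(files_to_scan(file_stats, "order_id", 1500))  # ['part-001.parquet']
```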

Step 4: Update Data in Delta Tables

Delta tables support update operations.

UPDATE sales_data

SET amount = 300

WHERE order_id = 1;

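This updates the matching rows as a single transaction. Behind the scenes, the affected data files are typically rewritten and the change is recorded in the transaction log. Plain file-based data lakes offer no comparable operation.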

Step 5: Delete Data from Delta Tables

You can remove records safely.

DELETE FROM sales_data

WHERE order_id = 1;

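Deletes are recorded in the transaction log. The old data files stay on storage until they are vacuumed, which is what keeps earlier versions queryable.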
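The sketch below shows, in plain Python, what a delete might look like at the file level. It assumes the classic copy-on-write behavior (newer Delta Lake releases can use deletion vectors instead), and every name in it is hypothetical. Updates follow the same pattern: files with matching rows are rewritten, and the log records a remove plus an add.

```python
# Toy copy-on-write delete (illustrative only, not Delta Lake's real code).

def delete_where(files, predicate, version):
    """Return the commit (list of actions) produced by a copy-on-write delete.

    `files` maps file name -> list of row dicts. Old files are left in `files`;
    they are only marked as removed in the returned commit.
    """
    commit = []
    for name, rows in list(files.items()):
        kept = [row for row in rows if not predicate(row)]
        if len(kept) == len(rows):
            continue  # no matching rows, so this file is left untouched
        commit.append(("remove", name))
        if kept:
            new_name = f"{name}.v{version}"
            files[new_name] = kept  # surviving rows go into a new file
            commit.append(("add", new_name))
    return commit


files = {
    "part-000": [{"order_id": 1, "amount": 250.5}, {"order_id": 2, "amount": 80.0}],
    "part-001": [{"order_id": 3, "amount": 12.0}],
}

commit = delete_where(files, lambda row: row["order_id"] == 1, version=1)
print(commit)              # [('remove', 'part-000'), ('add', 'part-000.v1')]
print("part-000" in files)  # True: the old file still exists on storage
```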

Step 6: Use Merge for Upserts

Merge allows insert and update in one operation.

MERGE INTO sales_data AS target

USING new_sales AS source

ON target.order_id = source.order_id

WHEN MATCHED THEN

UPDATE SET *

WHEN NOT MATCHED THEN

INSERT *;

Merge is useful for change data capture scenarios.
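To illustrate what the two branches of the merge do, here is a small plain-Python sketch of upsert semantics on lists of rows. It models only the logic of the statement, not how Delta Lake executes it, and the sample rows are invented.

```python
# Toy upsert: the same matched / not-matched logic as the MERGE statement,
# applied to in-memory rows keyed by order_id.

def merge_upsert(target, source, key="order_id"):
    """Return the target rows after applying the source rows as an upsert."""
    merged = {row[key]: dict(row) for row in target}
    for row in source:
        if row[key] in merged:
            merged[row[key]].update(row)   # WHEN MATCHED THEN UPDATE SET *
        else:
            merged[row[key]] = dict(row)   # WHEN NOT MATCHED THEN INSERT *
    return sorted(merged.values(), key=lambda r: r[key])


target = [
    {"order_id": 1, "amount": 250.5},
    {"order_id": 2, "amount": 80.0},
]
source = [
    {"order_id": 2, "amount": 95.0},   # existing order: amount is updated
    {"order_id": 3, "amount": 40.0},   # new order: row is inserted
]

for row in merge_upsert(target, source):
    print(row)
```

One thing the sketch glosses over: if several source rows match the same target row, Delta Lake rejects the merge, so change feeds are usually deduplicated by key first.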

Step 7: Enable Schema Enforcement

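Delta tables enforce the table schema on every write by default. If new data has extra columns or mismatched column types, the write fails unless schema evolution is enabled.

To allow schema changes for the current session:

SET spark.databricks.delta.schema.autoMerge.enabled = true;

For a single DataFrame write, the writer option mergeSchema set to true has the same effect.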

This supports evolving business needs.
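The check itself can be pictured with a short plain-Python sketch. This is a simplified model under stated assumptions: schemas are plain dictionaries of column name to type name, the `channel` column is hypothetical, and real Delta Lake applies more detailed rules (nullability, nested types, allowed type widening).

```python
# Toy schema enforcement: reject writes with unknown columns or changed types,
# unless evolution is allowed, in which case new columns are added.

def check_schema(table_schema, incoming_schema, allow_evolution=False):
    """Return the schema to write with, or raise if the write must be rejected."""
    for column, dtype in incoming_schema.items():
        if column in table_schema and table_schema[column] != dtype:
            raise TypeError(
                f"type mismatch for {column!r}: {table_schema[column]} vs {dtype}"
            )
    extra = [c for c in incoming_schema if c not in table_schema]
    if extra and not allow_evolution:
        raise ValueError(f"unexpected columns: {extra}")
    return {**table_schema, **{c: incoming_schema[c] for c in extra}}


table_schema = {
    "order_id": "int",
    "customer_id": "int",
    "amount": "double",
    "order_date": "date",
}
incoming = {**table_schema, "channel": "string"}  # hypothetical extra column

try:
    check_schema(table_schema, incoming)
except ValueError as err:
    print("rejected:", err)

evolved = check_schema(table_schema, incoming, allow_evolution=True)
print(list(evolved))
```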

Step 8: Use Time Travel

One powerful feature of delta tables is time travel.

You can query previous versions:

SELECT * FROM sales_data VERSION AS OF 3;

Or by timestamp:

SELECT * FROM sales_data TIMESTAMP AS OF '2026-01-01';

Time travel helps with audits and debugging.
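A timestamp query has to be mapped to a version number first. The sketch below shows one way to think about that lookup: pick the latest commit at or before the requested time. The commit times are invented, and this is a simplified model rather than Delta Lake's own resolution code.

```python
# Toy timestamp-to-version lookup for time travel.
from bisect import bisect_right
from datetime import datetime


def version_as_of(commit_times, timestamp):
    """Return the latest version committed at or before `timestamp`.

    `commit_times` must be sorted; index i holds the commit time of version i.
    """
    index = bisect_right(commit_times, timestamp)
    if index == 0:
        raise ValueError("timestamp is earlier than the first commit")
    return index - 1


# Hypothetical commit history: versions 0, 1, and 2.
commit_times = [
    datetime(2025, 12, 30, 9, 0),
    datetime(2025, 12, 31, 9, 0),
    datetime(2026, 1, 2, 9, 0),
]

print(version_as_of(commit_times, datetime(2026, 1, 1)))  # 1
```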

Step 9: Optimize Delta Tables

Over time, many small files can slow performance. Delta tables provide optimization features.

Run Optimize

OPTIMIZE sales_data;

This compacts small files into larger ones.
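Compaction is essentially a bin-packing problem. The following plain-Python sketch groups small files greedily up to a target size. The `target_mb` value and file sizes are assumptions chosen for the example, and the real OPTIMIZE command uses its own planning logic.

```python
# Toy compaction planner: group small files into bins of at most target_mb.

def plan_compaction(file_sizes_mb, target_mb=512):
    """Return groups of file names that would each be rewritten as one file."""
    groups, current, current_size = [], [], 0
    for name, size in sorted(file_sizes_mb.items()):
        if size >= target_mb:
            continue  # already large enough, leave it alone
        if current and current_size + size > target_mb:
            groups.append(current)
            current, current_size = [], 0
        current.append(name)
        current_size += size
    if current:
        groups.append(current)
    # Rewriting a single file on its own gains nothing, so drop those groups.
    return [group for group in groups if len(group) > 1]


# Hypothetical file sizes in megabytes.
file_sizes_mb = {"a": 100, "b": 200, "c": 300, "d": 2000, "e": 50}

print(plan_compaction(file_sizes_mb))  # [['a', 'b'], ['c', 'e']]
```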

Use Z-Order

OPTIMIZE sales_data ZORDER BY (customer_id);

Z-ordering places rows with similar values in the chosen columns into the same files, so queries that filter on those columns can skip more data.
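Z-ordering is most interesting with two or more columns. The underlying idea is a Z-order (Morton) curve, which interleaves the bits of several values into one sort key. The sketch below shows that interleaving for two small integers. It illustrates the general technique only; how Delta Lake derives its own keys differs in detail, and the sample points are made up.

```python
# Toy Z-order (Morton) key: interleave the bits of two integers so that
# points close together in both dimensions get nearby sort keys.

def z_value(x, y, bits=8):
    """Interleave the low `bits` bits of x and y into a single integer."""
    z = 0
    for i in range(bits):
        z |= ((x >> i) & 1) << (2 * i)       # bits of x go to even positions
        z |= ((y >> i) & 1) << (2 * i + 1)   # bits of y go to odd positions
    return z


# Hypothetical (customer bucket, date bucket) pairs.
points = [(3, 3), (0, 1), (2, 2), (1, 0)]

print(z_value(3, 5))                                   # 39
print(sorted(points, key=lambda p: z_value(*p)))       # [(1, 0), (0, 1), (2, 2), (3, 3)]
```

Sorting rows by such a key before writing files keeps each file's min/max range narrow in both columns at once.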

Step 10: Vacuum Old Files

Delta tables store history. To remove old files and reduce storage cost:

VACUUM sales_data RETAIN 168 HOURS;

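This deletes data files that the table no longer references and that are older than the retention period (168 hours is 7 days). Versions that depend on those files can no longer be reached with time travel.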
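The retention rule can be sketched in a few lines of plain Python. This is a simplified model: it assumes we know when each file was removed from the table, and the file names and dates are hypothetical. The real command works from the transaction log and file timestamps on storage.

```python
# Toy vacuum check: which removed files are old enough to delete?
from datetime import datetime, timedelta


def vacuum_candidates(removed_at, now, retain_hours=168):
    """Return files removed from the table longer ago than the retention window."""
    cutoff = now - timedelta(hours=retain_hours)
    return sorted(name for name, ts in removed_at.items() if ts < cutoff)


# Hypothetical removal times for files no longer referenced by the table.
removed_at = {
    "part-000.parquet": datetime(2026, 1, 1),
    "part-003.parquet": datetime(2026, 1, 10),
}
now = datetime(2026, 1, 15)

# With 168 hours of retention the cutoff is 2026-01-08, so only the
# file removed on 2026-01-01 is eligible.
print(vacuum_candidates(removed_at, now))  # ['part-000.parquet']
```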

Best Practices for Using Delta Tables

To get maximum value from delta tables, follow these practices:

- Use clear naming conventions

- Organize tables into Bronze, Silver, and Gold layers

- Monitor file sizes

- Use merge for incremental updates

- Schedule optimization jobs

- Keep retention policies aligned with compliance needs

Delta Tables in Real Business Use Cases

Data Pipelines

Delta tables are ideal for batch and streaming pipelines. They support incremental processing.

Machine Learning

Data scientists can train models using versioned data.

Regulatory Reporting

Time travel allows teams to reproduce reports exactly as they were generated.

Energy and IoT Workloads

Sensor data from field devices can be stored and processed reliably.

Delta Tables vs Parquet Tables

Many teams ask why they should not simply use Parquet directly.

Parquet:

- Stores files

- No transaction log

- No built-in update support

Delta tables:

- Store files plus transaction log

- Support updates and deletes

- Provide schema checks

- Enable versioning

Delta tables offer better reliability.

Security and Governance with Delta Tables

Delta tables work well with governance tools such as Unity Catalog. This enables:

- Role-based access

- Audit trails

- Controlled data sharing

Security becomes easier to manage at scale.

Common Mistakes to Avoid

When using delta tables, avoid:

- Too many small files

- Full refreshes when incremental updates are possible

- Ignoring schema validation

- Skipping optimization jobs

Careful planning improves performance and cost control.

When Should You Use Delta Tables

You should use delta tables when:

- You need reliable updates

- You need historical versioning

- You want to support analytics and AI

- You need scalable data pipelines

- You require audit capability

They are ideal for modern lakehouse architectures.

Conclusion: How Tenplus Helps You Implement Delta Tables Correctly

Delta tables are powerful, but design matters. Poor structure can lead to slow performance and high cost.

Tenplus helps organizations design and implement delta tables as part of scalable data platforms. The team supports architecture planning, Medallion layering, optimization strategies, and governance integration.

Tenplus also offers a Free 15-Day Proof of Concept where businesses can test delta tables with real workloads and validate performance before scaling.

If your company is building a modern data platform using Databricks and Delta Lake, Tenplus provides the expertise to implement delta tables correctly, securely, and efficiently.

FAQs

What are delta tables used for?

Delta tables are used to store and manage structured data in a reliable way on cloud storage. They support updates, deletes, time travel, and schema checks. Many companies use delta tables for analytics, reporting, and machine learning workloads.

How are delta tables different from regular Parquet tables?

Parquet tables store files but do not track changes or support direct updates easily. Delta tables include a transaction log that ensures data consistency, version history, and safe merge operations. This makes delta tables more reliable for production data pipelines.

Can delta tables handle real-time data processing?

Yes. Delta tables support both batch and streaming workloads. They work well with streaming frameworks to process real-time data while maintaining data quality and consistency.